Research visit during your PhD – a great way to enrich your scientific training

My research visit

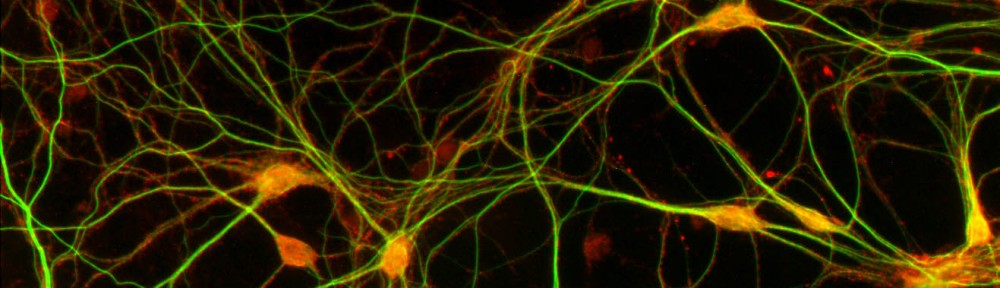

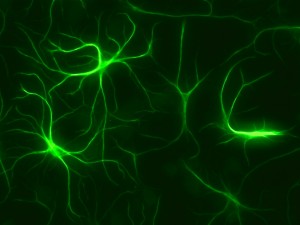

I am currently working as a visiting researcher, as part of my PhD project, at the Maurice Wohl Clinical Neuroscience Institute at King’s College London. This is a bustling neuroscience community that includes both basic and clinical researchers. The institute is on the medical campus, and its research actively aims to translate findings into patient care. I am working in the group of Dr. Sreedharan, which focuses on amyotrophic lateral sclerosis (ALS) and specifically on the molecular mechanisms of TDP-43 dysregulation in disease. My project here is to use CRISPR/Cas9 to generate induced pluripotent stem cells (iPSCs) carrying MCM3AP mutations, in order to model neuropathy in an isogenic background and study its links to TDP-43. As part of my research visit, we have collaborated with the Sanger Institute, where I generate the mutant iPSCs. I have also been able to participate in ALS research projects and generate different in vitro neuronal models. Additionally, it has been useful to be trained in methods such as high-content imaging and functional genomics.

How do you find a research visit?

My research visit was organized quite effortlessly, since our groups had an existing collaboration. My supervisor had been in touch with my PI at King’s College about our related findings. They had identified in a fly motor neuron screen that our newly discovered human disease gene is linked to the ALS protein TDP-43. A postdoc from his group had also visited our laboratory to do some preliminary studies on our patient fibroblasts. A year later, I went to an ALS conference to present my research, where I met the PI. Shortly after that, he came to give a talk at Biomedicum, and we discussed the opportunity for me to visit his laboratory and, through his connection, the Sanger Institute to generate iPS cells, which was really important for the progress of my project.

If you don’t have an existing collaborator, there are a few ways to organize your own research visit.

- Actively read and do research on your field. If you find an obvious or even an unexpected link to your work, you might be able to invite the researchers behind it as speakers and start your own collaboration.

- Go to conferences. Carefully selected international conferences may open a door to a new collaboration, but if you didn’t get a travel grant this year, even national conferences are a great way to network and establish collaborations locally. Conferences are a good way of strengthening your old collaborations, and you may also meet new people: they might walk up to your poster or talk and even offer to collaborate.

- Think of methods that would really enrich your research or may even be necessary for completing it. Find experts in those methods and contact them. You might be able to visit them.

- Talk to your supervisor. Your supervisor should agree to the visit early on and may even suggest which kind of visit would be most beneficial: one that strengthens a collaboration, one that teaches you about a specific aspect of your field, or one that provides methodological training.

Things to consider when planning a research visit

First: practicalities of your project. Make sure to choose the time carefully, so that the visit won’t interfere with your other projects. For example, it is quite easy to continue working on an article if you are in the writing phase, but challenging if you haven’t finished all the experiments. Second: costs. Living costs abroad may be higher, so consider applying for additional grants or making other arrangements so that you can finish your project successfully. Make sure that you agree with your employer or grant provider that the research visit is a necessary part of your training. Also, make sure to complete all the necessary paperwork with the host institute well in advance. Finally: communication. Make sure to communicate about all aspects of the planning with everyone in advance for a smooth start to your project. One way to stay in touch with your supervisor and group is to have regular online meetings through services like appear.in or Skype. A research visit requires flexibility from everyone, and mostly from you, so be open to changes in plans.

What I have learned

My research visit has expanded my perspective in various ways. I have attended meetings and talks outside my immediate field, which has enriched my general knowledge and allowed me to make new contacts. The neuroscience community at King’s College is incredibly vibrant, and talking to researchers working on very different topics has broadened my view. It is an incredible privilege to experience a different research culture, meet people from different fields and get ideas for your project that you had never thought of. The research visit really has enriched my experience, allowed collaboration with multiple institutes and improved my research. I would recommend it to anyone, whether you are looking to expand your methodological knowledge, improve your current project through collaboration or expand your knowledge of a different research area.

Most of all, anyone can do it – with a little effort and planning!

Rosa Woldegebriel (MSc, PhD Candidate)

Brain & Mind PhD candidate at Tyynismaa lab, University of Helsinki

Visiting Researcher at Sreedharan lab, King’s College London