The following post has been written by Julia Poutanen, who recently got a BA in French Philology, and is now continuing with Master’s studies in the same field. She wrote this essay as part of the Digital Humanities studies and was gracious enough to allow us to publish it here. Thank you!

Essay on the Digitization of Historical Material and the Impact on Digital Humanities

In this essay, I will discuss what needs to be taken into consideration when historical materials are digitized for further use. The essay is based on the presentation given on the 8th of December 2015 at the symposium Conceptual Change – Digital Humanities Case Studies and on the study by Järvelin et al. (2015). First, I will give the basic information about the study. Then I will reflect on the results of the study and their impact. Finally, I will consider the study from the point of view of digital humanities and the sort of impact it has on the field.

The study

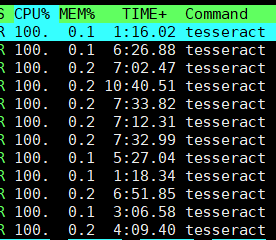

In order to understand what the study is about, I will briefly present the background related to the retrieval of historical data, as given in the study itself. The data used is OCRed, which means that it has been transformed into digital form via Optical Character Recognition. OCR is very prone to errors with historical texts for reasons like paper quality, typefaces, and print. Another problem comes from the language itself: it differs from the language used nowadays because of the lack of standardization. Geographical variants were normal, and in general there was no consensus on how to spell words, which can be seen both in the variation between newspapers and in the variation of language use. In a certain way, historical document retrieval is thus a “cross-language information retrieval” problem. As for the methods, s-gram matching and frequent case generation were used together with topic-level analysis. S-gram matching is based on the fact that the same concept is represented by different morphological variants and that similar words have similar meanings. These variants can then be used to query for words. Frequent case generation, on the other hand, is used to decide which morphological forms are the most significant ones for the query words. This is done by studying the frequency distributions of case forms. In addition, the newspapers were categorized by topics.
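To make the idea of s-gram matching more concrete, here is a minimal sketch in Python. It is not the implementation used in the study: the function names, the skip distances, the padding character, and the use of Jaccard overlap as the similarity measure are all my own assumptions for illustration. An s-gram is taken here to be a pair of characters with zero or more characters skipped between them, and optional left padding gives the beginning of the word extra weight.

```python
def s_grams(word, skips=(0, 1), left_pad=0, pad_char="_"):
    """Return the set of character pairs of `word` for each skip distance.

    skip=0 gives ordinary bigrams, skip=1 pairs each character with the one
    two positions later, and so on. `left_pad` (an assumption here) prepends
    padding characters so that word-initial characters appear in more pairs.
    """
    padded = pad_char * left_pad + word.lower()
    grams = set()
    for skip in skips:
        step = skip + 1
        for i in range(len(padded) - step):
            # Tag each pair with its skip distance so classes stay separate.
            grams.add((skip, padded[i] + padded[i + step]))
    return grams


def s_gram_similarity(a, b, skips=(0, 1), left_pad=0):
    """Jaccard overlap of the two s-gram sets (an assumed similarity measure)."""
    ga = s_grams(a, skips, left_pad)
    gb = s_grams(b, skips, left_pad)
    if not ga and not gb:
        return 1.0
    return len(ga & gb) / len(ga | gb)


def rank_candidates(query, vocabulary, skips=(0, 1), left_pad=0):
    """Sort the words of `vocabulary` from most to least similar to `query`."""
    return sorted(
        vocabulary,
        key=lambda w: s_gram_similarity(query, w, skips, left_pad),
        reverse=True,
    )


# Hypothetical example: an inflected form and an OCR-style misspelling of
# "sanomalehti" (newspaper) should rank above an unrelated word.
ranked = rank_candidates("sanomalehti", ["hevonen", "sanomalehden", "sanomalchti"])
print(ranked)
```

In a sketch like this, the words at the top of the ranking would be the candidate variants to include in a query, while the cut-off point would have to be decided separately.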

The research questions are related more to computer science than to humanities. The main purpose is to see whether string matching methods work well for retrieving material, in this case old newspapers, in a highly inflectional language like Finnish. More specifically, they try to measure how well s-gram matching captures the actual variation in the data. They also try to see which is better when it comes to retrieval: better coverage of all the word forms or high precision. Topics were also used to analyze whether query expansion would lead to better performance. All in all, the research questions themselves belong to computer science, but the humanities are “under” these questions. How does Finnish work with retrieving material? How has the language changed? How is the morphological evolution visible in the data, and how does this affect the retrieval? All these things have to be taken into consideration in order to answer the “real” research questions.

The data used in this research is a large corpus of historical newspapers in Finnish, and it belongs to the archives of the National Library of Finland. In this specific research, however, they only used a subset of the archive, which includes digitized newspapers from 1829 to 1890 with 180 468 documents. The quality of the data varies a lot, which makes pretty much everything difficult. People can access the same corpus on the web in three ways: through the National Library web pages, through the corpus service Korp, or by downloading the data as a whole. Not all of it is available to everyone, however. On the National Library web pages, one can do queries with words from the data, but the results are page images. On Korp, the given words are seen in KWIC view. The downloadable version of the data includes, for instance, metadata, and it can be hard to handle on one’s own, but for people who know what they’re doing, it can be a really good source of research material.

Since the word forms vary from the contemporary ones, it is difficult to form a solid list of query words. In order to handle this, certain measures have to be taken. The methods used are pretty much divided into three steps. First, they used string matching in order to retrieve lists of words that are close to their contemporary counterparts in newspaper collections from the 1800s. This was done quite easily since in 19th century Finnish, the variants, whether they are inflectional, historical or OCR related, follow patterns with the same substitutions, insertions, deletions, and their combinations. Also, left padding was used to emphasize the stability of the beginnings of the words. This way, it becomes really clear that Finnish is a language of suffixes which mainly uses inflected forms to express linguistic relations, so that the endings of the words determine the meaning and thus, in this case, the similarity of the strings.

To handle the noisiness of the data, as they call it, they used a combination of frequent case generation and s-grams to cover the inflectional variation and to reduce the noisiness. This creates overlap in the s-gram variants, which is why each variant was added only once into the query. This enhances the query performance, although the number of variants is lower. Second, they categorized these s-gram variants manually according to how far away they were from the actual words. Then, they categorized how relevant the variant candidates were and analyzed the query word length and the s-gram frequencies. Some statistical measurements were helpful in the analysis. It was important to see the relevance of these statistics since irrelevant documents naturally aren’t very useful for the users. That is why they also evaluated the results on the topic level, which helped them to see how well topics and query keys perform.
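The combination of frequent case generation and variant expansion can be sketched roughly as follows. This is only an illustration under my own assumptions, not the procedure of the study: the coverage threshold, the function names, the example counts, and the misspelled variants are all hypothetical, and `find_variants` stands in for whatever string matching step supplies the variants of a word form.

```python
def frequent_forms(case_counts, coverage=0.8):
    """Pick the most frequent case forms until `coverage` of all occurrences
    is reached. The threshold is an assumed parameter for this sketch."""
    total = sum(case_counts.values())
    chosen, covered = [], 0
    by_frequency = sorted(case_counts.items(), key=lambda kv: kv[1], reverse=True)
    for form, count in by_frequency:
        if total == 0 or covered / total >= coverage:
            break
        chosen.append(form)
        covered += count
    return chosen


def build_query(forms, find_variants):
    """Expand each form with its variants, adding every variant only once."""
    seen, query = set(), []
    for form in forms:
        for variant in [form, *find_variants(form)]:
            if variant not in seen:
                seen.add(variant)
                query.append(variant)
    return query


# Hypothetical frequency distribution of case forms of "lehti" (paper).
counts = {"lehti": 50, "lehden": 30, "lehteä": 15, "lehdettä": 5}

# Hypothetical, overlapping variant lists for two of the forms.
variants = {"lehti": ["lehti", "lehtl"], "lehden": ["lehtl", "lehdcn"]}

forms = frequent_forms(counts, coverage=0.75)
query = build_query(forms, lambda form: variants.get(form, []))
print(forms)
print(query)
```

Here the variant that appears under both forms ends up in the query only once, which is the point of adding each variant a single time.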
